Create an input test file in the local file system and copy it to HDFS. Then run the MapReduce program (job) with the `hadoop jar` command; the third argument is the jar file that contains the class file. If the output directory already exists, the MapReduce job will fail with a `FileAlreadyExistsException`. In this case, delete the output directory and re-execute the job.

# How to download Spark and run the wordcount example

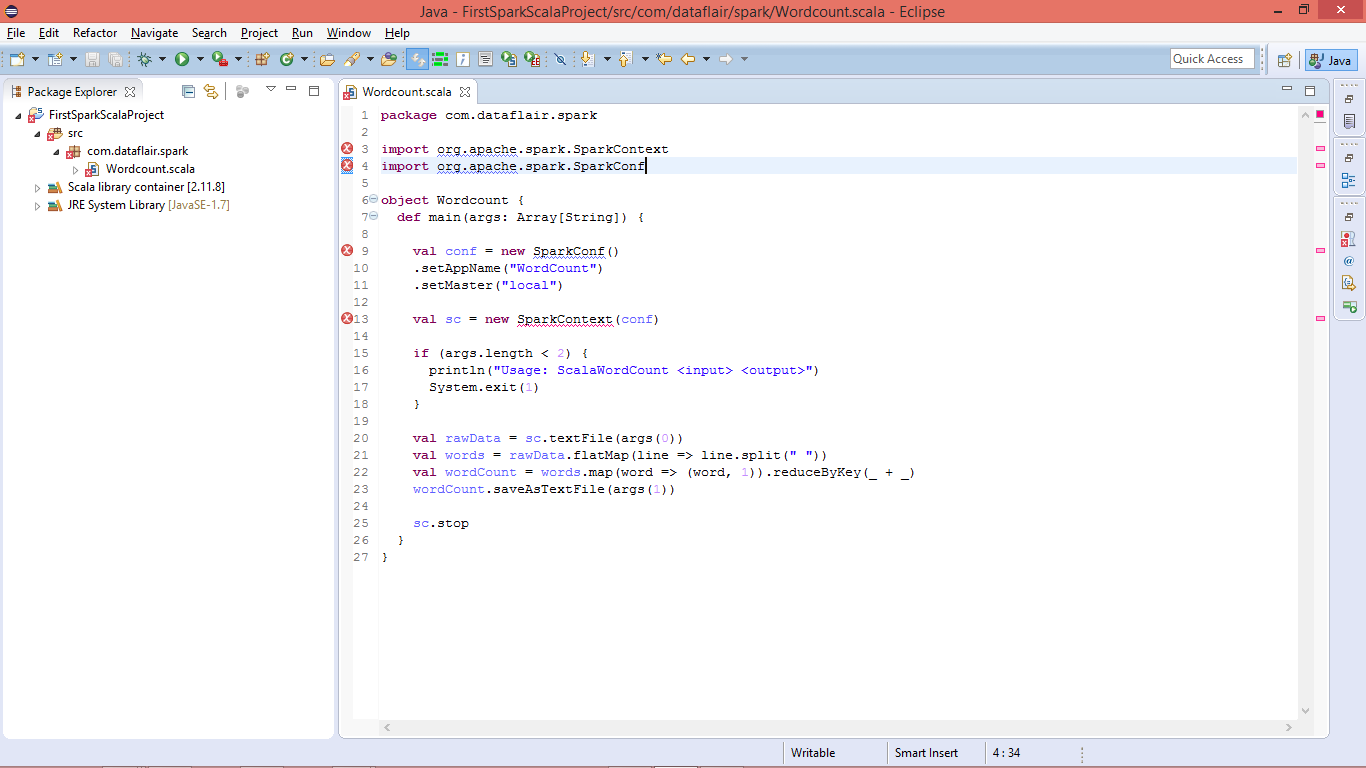

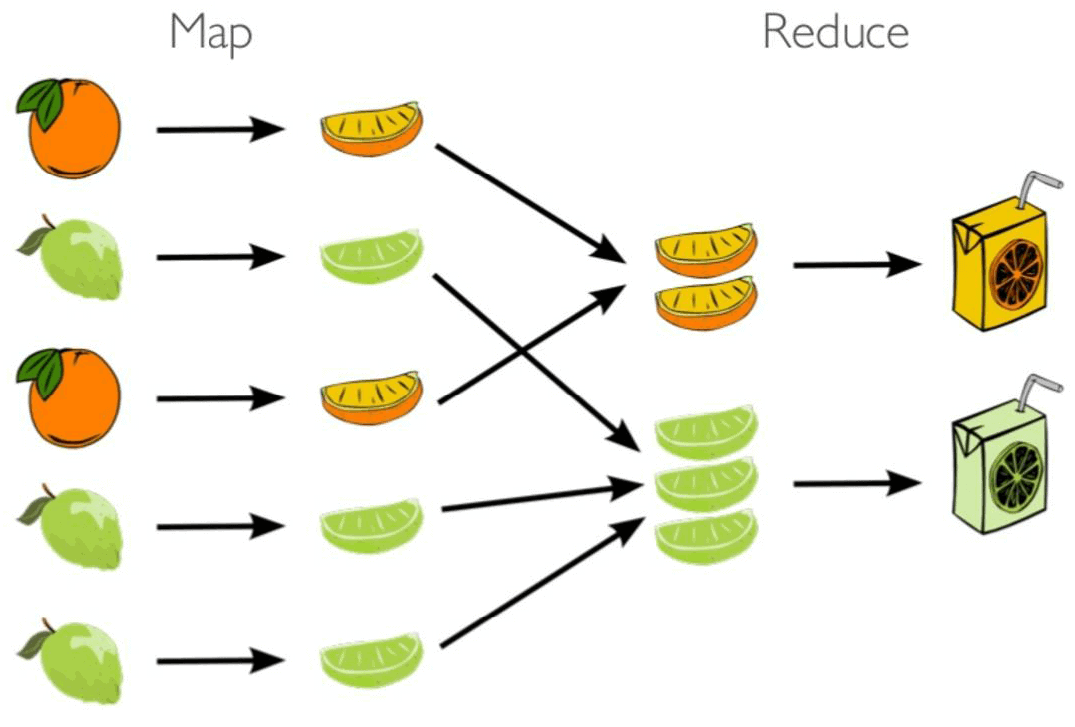

In this demonstration, we use the wordcount MapReduce program from the above jar to count the occurrences of each word in an input file and write the counts to an output file. Job execution and outputs can also be verified through the web interface; please refer to this post to learn how to browse HDFS and the Job Tracker using the web user interface. A separate example shows how to SSH into your project's Cloud Dataproc cluster master node, then use the spark-shell REPL to create and run a Scala wordcount. The actual output content of the MapReduce job is written into part files.
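The counting that the wordcount job performs can be sketched in plain Python, without a cluster. This is only an illustration of the map and reduce steps, not the code shipped in the examples jar; the function names and the whitespace tokenization are assumptions made for the sketch.

```python
from collections import defaultdict


def map_phase(lines):
    """Emit a (word, 1) pair for every whitespace-separated token."""
    for line in lines:
        for word in line.split():
            yield word, 1


def reduce_phase(pairs):
    """Sum the counts emitted for each distinct word."""
    counts = defaultdict(int)
    for word, one in pairs:
        counts[word] += one
    return dict(counts)


def word_count(lines):
    """Run the map step and feed its pairs into the reduce step."""
    return reduce_phase(map_phase(lines))


if __name__ == "__main__":
    sample = ["hello hadoop", "hello spark"]  # hypothetical input lines
    for word, count in sorted(word_count(sample).items()):
        print(f"{word}\t{count}")
```

On a real cluster the pairs produced by the map step are shuffled across the network to the reducers; here both steps simply run in one process.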

So, the number of part output files will be equal to the number of reducers run as part of the job.

To download Spark, choose a Spark release (3.2.1, released Jan 26 2022; 3.1.2, Jun 01 2021; or 3.0.3, Jun 23 2021) and a package type: pre-built for Apache Hadoop 3.3 and later; pre-built for Apache Hadoop 3.3 and later (Scala 2.13); pre-built for Apache Hadoop 2.7; pre-built with user-provided Apache Hadoop; or source code.
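The statement that the number of part files equals the number of reducers can be illustrated with a small Python sketch. It assigns each word to a reducer and groups the counts under one file name per reducer. The `crc32` hash is a deterministic stand-in and is not Hadoop's own partitioner; the `part-r-NNNNN` names follow the usual naming of reducer output files, and the function names are made up for this sketch.

```python
import zlib


def partition(word, num_reducers):
    """Pick a reducer index for a word (crc32 is a stand-in hash, not Hadoop's)."""
    return zlib.crc32(word.encode("utf-8")) % num_reducers


def part_files(word_counts, num_reducers):
    """Group counts into one part file per reducer, including empty ones."""
    files = {f"part-r-{i:05d}": {} for i in range(num_reducers)}
    for word, count in word_counts.items():
        name = f"part-r-{partition(word, num_reducers):05d}"
        files[name][word] = count
    return files


if __name__ == "__main__":
    counts = {"hello": 2, "hadoop": 1, "spark": 1}  # hypothetical counts
    for name, content in sorted(part_files(counts, 3).items()):
        print(name, content)
```

Every reducer produces a file even when no keys were routed to it, which is why the file count tracks the reducer count rather than the number of distinct words.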

The output directory should not be present before running the MapReduce job. Otherwise, the exception “FileAlreadyExistsException: Output directory hdfs://localhost:9000/test/output already exists” will be thrown.
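The rule that the output directory must not exist can be mimicked on the local file system. The sketch below is an analogy only: it uses Python's built-in `FileExistsError` and a temporary local directory rather than HDFS, and both helper names are hypothetical.

```python
import os
import shutil
import tempfile


def ensure_output_absent(path):
    """Fail early if the output directory is already there, as the job would."""
    if os.path.exists(path):
        raise FileExistsError(f"Output directory {path} already exists")


def delete_output(path):
    """Remove a stale output directory so the job can be re-executed."""
    shutil.rmtree(path, ignore_errors=True)


if __name__ == "__main__":
    stale = tempfile.mkdtemp()  # stands in for a leftover output directory
    try:
        ensure_output_absent(stale)
    except FileExistsError as err:
        print(err)
        delete_output(stale)
    ensure_output_absent(stale)  # passes once the directory is gone
```

On a real cluster the directory lives in HDFS, so the cleanup would typically be done with `hdfs dfs -rm -r` on the output path instead of `shutil.rmtree`.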

`$ hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.3.0.jar wordcount /user/data/intest.`

The third argument is the jar file that contains the class file (wordcount.class) for the wordcount program. The fourth argument is the name of the public class that is the driver for the MapReduce job. The fifth argument is the path to the input data set. The last argument is the directory path under which the output files will be created.
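The argument order described above can be captured in a small Python helper that assembles the command as a list. This is a sketch under stated assumptions: the helper name is hypothetical, and the Hadoop home, input path, and output path used in the demo are placeholders, not values from this post.

```python
import shlex


def build_wordcount_command(hadoop_home, input_path, output_path, version="2.3.0"):
    """Return the argument list for the wordcount example job."""
    jar = (
        f"{hadoop_home}/share/hadoop/mapreduce/"
        f"hadoop-mapreduce-examples-{version}.jar"
    )
    # Order: launcher, subcommand, jar file, driver name, input, output.
    return ["hadoop", "jar", jar, "wordcount", input_path, output_path]


if __name__ == "__main__":
    # All three values below are placeholders chosen for illustration.
    cmd = build_wordcount_command("/opt/hadoop", "/path/to/input", "/path/to/output")
    print(shlex.join(cmd))
```

Keeping the command as a list makes it straightforward to pass to `subprocess.run` on a machine where Hadoop is installed, without worrying about shell quoting.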